Adaptive Joins and Memory Grants in SQL Server

Adaptive joins let the optimizer choose between a Hash Join and a Nested Loop join at runtime, which can be fantastic for performance when row count …

Read More

Before you do all the work to map out a complex sliding window table partitioning scheme for your SQL Server tables, here’s the top five questions I’d think through carefully:

Some folks think they need partitioning for performance– but it really shines as a data management feature. Just because you’ve got tables with millions of rows in them doesn’t necessarily mean that partitioning will make queries faster. Make sure you’ve worked through traditional indexing and query re-writes first. Partitioning is lots of work, so don’t skip this question.

Bonus: traditional indexes don’t require Enterprise Edition like partitioning. And even if you do eventually go down the partitioning route, you’re going to need to be familiar with the top queries that hit the table for tuning later, anyway.

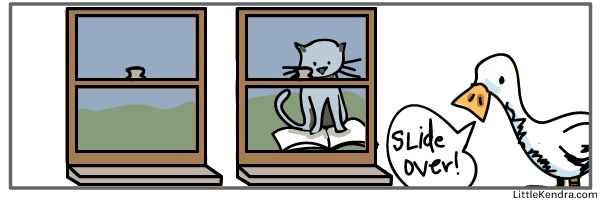

With “sliding window” table partitioning, you regularly add new boundary points and partitions and “switch in” recent data. Similarly, you’re regularly switching out older data and removing older boundary points.

Even with regular data loads, some patterns work well with a “rotating log buffer” pattern, which doesn’t require constantly modifying your partition function and scheme. Read more about it on Thomas Kejser’s blog here.

Many of us have written tutorials showing sliding window partitioning where each partition sits on its own filegroup. When you add new boundary points, you add new filegroups. That’s extra scripting, and extra places where things can go wrong. Do you really need it?

There’s a few benefits to using lots of filegroups, but not everyone can take advantage of them (or needs them):

Important: whatever you decide, I encourage you to not put everything in the PRIMARY filegroup. If you’ve got enough data that you need partitioning, you should have at least one additional filegroup for managing restores in worst case scenarios.

Map this out before you write the code. When will the jobs run, and what should happen if they fail? Should someone be engaged? What tools will they need, and when is the Service Level Agreement for when the process has to be complete? You’ll need lots of details on this to make sure your automation and documentation meet the bar.

I’m cheating on the numbering a little, but this is related to job failure. SQL Server 2014 added two really nice features for table partitioning:

These two features are a huge reason to be on SQL Server 2014 or higher for any table partitioning implementation.

If you can get to SQL Server 2016, it adds partition level truncation. (Yay!)

Every sliding window partitioning implementation should have a secondary job or monitoring system checking state with a really obvious name, like “DBA - Critical Partition Monitoring.” The job should use logic to check and make sure that the right number of boundary points exist.

It’s very, very common for the jobs which automate table partitioning to be disabled, and accidentally not re-enabled. That leads to “lumpy” partitions which are a big pain to fix. Don’t let it happen to you.

I’d love to hear what you’ve learned from your implementations, too.

Copyright (c) 2025, Catalyze SQL, LLC; all rights reserved. Opinions expressed on this site are solely those of Kendra Little of Catalyze SQL, LLC. Content policy: Short excerpts of blog posts (3 sentences) may be republished, but longer excerpts and artwork cannot be shared without explicit permission.